Generative AI chatbot: the challenge of relevant responses

Large Language Models (LLMs) have revolutionized the field of Artificial Intelligence by enabling machines to generate human-quality text. However, these models are not without limits. Today, chatbots based on generative AI can produce incoherent, irrelevant, or even incorrect responses, particularly when faced with complex queries or ambiguous contexts. In addition, generative AI chatbots can suffer from "hallucinations", a phenomenon that leads them to state information that is irrelevant or not even real. This lack of reliability can result in an unsatisfactory user experience and a loss of trust in the technology.

It's against this backdrop that fine-tuning and RAG (Retrieval Augmented Generation) have emerged as promising solutions to these challenges, personalizing and refining interactions between users and AI. While both methods aim to improve the relevance and quality of chatbot responses, they rely on quite distinct strategies and produce uneven results.

Fine-tuning: a method for optimizing the results of generative AI

Understanding fine-tuning

For generative Artificial Intelligence chatbots to be truly effective, they must either be trained on the data specific to their field of application or have access to it. Fine-tuning is one of the common approaches used to improve LLM performance.

Fine-tuning is a machine learning technique that adjusts a pre-trained language model by re-training it on task-specific data. Rather than creating a model from scratch, fine-tuning takes an existing Large Language Model (LLM), such as GPT-4 Turbo or an open-source model like Mistral 7B, and specializes it to meet particular needs. Fine-tuning can improve the accuracy of model-generated responses, but may be limited by the quality and representativeness of the training data.

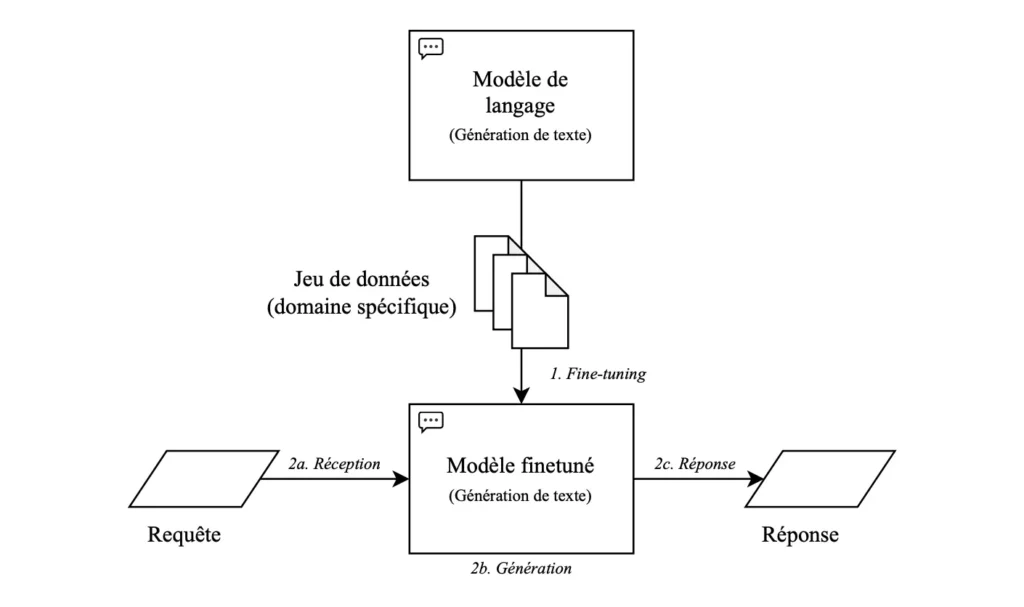

How fine-tuning works

The fine-tuning process involves several steps. First, a pre-trained language model is selected to serve as the base. Next, this model is fed data specific to the task the chatbot must perform, such as example questions and answers. This data is often annotated to show the model what constitutes a correct answer in different contexts.

Once the model has been trained on this specific data, it is ready to be used to generate answers based on user queries. Over time, the model can be fine-tuned and re-trained to improve its performance based on user feedback and new data.
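As a minimal sketch of the data-preparation step described above, the following Python snippet turns annotated question/answer pairs into JSONL records, a layout that many fine-tuning tools accept. The field names (`prompt`, `completion`), the sample pairs and the helper `to_jsonl` are hypothetical; the exact format depends on the training framework used.

```python
import json

# Hypothetical annotated examples: each pair shows the model what a
# correct answer looks like for a given question.
examples = [
    {"question": "How do I reset my password?",
     "answer": "Open the login page and click 'Forgot password'."},
    {"question": "Who do I contact about a broken laptop?",
     "answer": "Open a ticket with the IT service desk."},
]


def to_jsonl(pairs):
    """Serialize question/answer pairs as one JSON object per line."""
    lines = []
    for pair in pairs:
        record = {"prompt": pair["question"].strip(),
                  "completion": pair["answer"].strip()}
        lines.append(json.dumps(record, ensure_ascii=False))
    return "\n".join(lines)


training_file = to_jsonl(examples)
print(training_file)
```

The resulting text could then be written to disk and passed to whichever training tool is in use; the quality of these pairs largely determines the quality of the fine-tuned model.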

Advantages and limitations of fine-tuning

The main advantage of fine-tuning is that it improves the relevance and accuracy of responses generated by the chatbot by having it "ingest" new knowledge. By fine-tuning a pre-trained model on task-specific data, the chatbot becomes capable of responding more accurately to user queries, even in complex or ambiguous contexts.

What's more, fine-tuning offers greater flexibility and scalability than creating models from scratch. Rather than having to train a complete model on exhaustive data, developers can use pre-trained models as a starting point, saving time and resources while achieving high-quality results. In short, fine-tuning is a pragmatic and relatively simple way to improve the relevance of chatbot responses.

However, this approach has several limitations. First, fine-tuning is often limited by the quality, quantity and representativeness of the training data. In addition, a fine-tuned model can be ineffective for complex tasks or when faced with questions outside its area of specialization, and may then generate inaccurate responses, revealing its limited ability to adapt to unexpected queries. Finally, fine-tuning is costly in financial and environmental terms, especially when the volume of data to be processed is large. This is where the RAG method comes in: an approach in the field of generative AI that integrates document search and selection mechanisms to provide more precise and reliable answers.

The RAG method: a revolutionary approach to generative AI

Understanding the RAG method

Retrieval Augmented Generation (RAG) is an enrichment method that aims to improve the relevance of chatbot responses by combining information retrieval with text generation. This method enables the model to produce responses enriched by up-to-date or domain-specific data found in an external database.

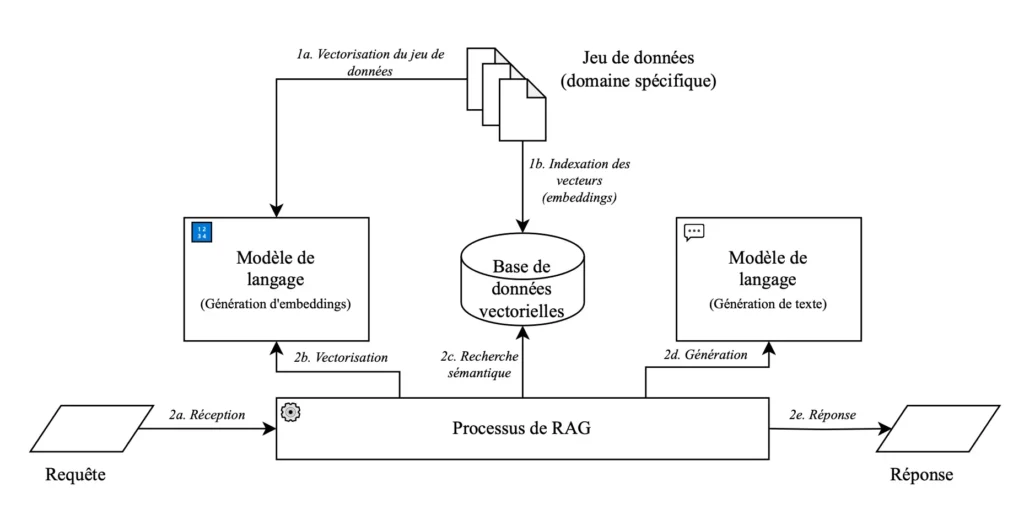

How the RAG method works

The first step in the RAG method is retrieval. Here, the chatbot searches a vast knowledge base for information relevant to the user's query. This search is usually performed with techniques such as entity search, semantic search, or other, more advanced retrieval algorithms.
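The retrieval step can be sketched as follows. This is a toy illustration, assuming a bag-of-words cosine similarity as a stand-in for semantic search; production systems typically rely on learned embeddings and a vector index instead. The function names, the `top_k` and `min_score` parameters and the sample knowledge base are all hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query, documents, top_k=2, min_score=0.0):
    """Return up to top_k (score, document) pairs scoring above min_score."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(d))), d) for d in documents]
    scored = [pair for pair in scored if pair[0] > min_score]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]


# Hypothetical knowledge base entries.
knowledge_base = [
    "To reset a password, open the login page and click Forgot password.",
    "The cafeteria is open from noon to two on weekdays.",
    "Laptop repairs are handled by the IT service desk.",
]

results = retrieve("How can I reset my password?", knowledge_base, top_k=1)
print(results)
```

Raising `min_score` makes retrieval stricter, while raising `top_k` passes more context to the later stages; both are the kind of retrieval parameter that can be adjusted per application.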

Once the relevant information has been retrieved, the next step is to identify its most important parts, focusing on the aspects of the user's query that require a specific response. This step enables the chatbot to concentrate on the key elements, improving the quality and relevance of the response generated.

Finally comes the generation stage. At this stage, the chatbot uses the information retrieved and the parts highlighted to generate an appropriate response to the user's request. This text generation can be done using LLMs that are capable of producing coherent, natural responses.
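The selection and generation stages can be sketched in the same spirit. The snippet below assumes passages have already been retrieved, keeps the sentences that share the most words with the query, and assembles a prompt. The helper names, the sample passages and the `generate` function are hypothetical; `generate` is only a placeholder where a real system would call an LLM.

```python
import re


def tokenize(text):
    """Return the set of lowercase alphanumeric tokens in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def select_sentences(query, passages, max_sentences=2):
    """Keep the sentences that share the most words with the query."""
    query_terms = tokenize(query)
    sentences = []
    for passage in passages:
        for sentence in re.split(r"(?<=[.!?])\s+", passage):
            overlap = len(query_terms & tokenize(sentence))
            if overlap > 0:
                sentences.append((overlap, sentence.strip()))
    sentences.sort(key=lambda item: item[0], reverse=True)
    return [s for _, s in sentences[:max_sentences]]


def build_prompt(query, context_sentences):
    """Assemble the selected context and the question into one prompt."""
    context = "\n".join(f"- {s}" for s in context_sentences)
    return (
        "Answer the question using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {query}\n"
        "Answer:"
    )


def generate(prompt):
    """Placeholder for the LLM call; a real system would query a model here."""
    return f"[model output for a prompt of {len(prompt)} characters]"


# Hypothetical passages returned by the retrieval step.
retrieved = [
    "Passwords expire every 90 days. To reset a password, click Forgot password on the login page.",
    "The service desk is open on weekdays.",
]
query = "How do I reset my password?"
selected = select_sentences(query, retrieved)
prompt = build_prompt(query, selected)
print(prompt)
print(generate(prompt))
```

Instructing the model to answer only from the supplied context is one common way to limit hallucinations, since the response is grounded in retrieved text rather than in the model's internal knowledge alone.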

The advantages of the RAG method for generative AI chatbots

The RAG method offers several significant advantages in the creation of smarter chatbots. Firstly, it produces more relevant and specialized responses, which improves the user experience and reinforces trust in the chatbot.

In addition, it allows greater flexibility in managing the responses generated. By adjusting the retrieval parameters, it is possible to tailor the chatbot's behavior to the specific needs of its application, contributing to increased personalization and better adaptation to different usage scenarios.

Finally, new knowledge can be integrated continuously, so the chatbot's answers stay current without the need to retrain models. By analyzing user interactions and regularly tuning the retrieval algorithms, the chatbot can improve over time, offering increasingly relevant and accurate responses.
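To illustrate how knowledge can be added without retraining, here is a minimal sketch of an in-memory knowledge base. The `KnowledgeBase` class, its word-overlap search and the sample documents are hypothetical simplifications; a real deployment would typically use a vector database, but the principle is the same: new documents are indexed and the model's weights are left untouched.

```python
import re


def words(text):
    """Return the set of lowercase alphanumeric tokens in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


class KnowledgeBase:
    """Toy in-memory index: documents can be added at any time."""

    def __init__(self):
        self.documents = []

    def add(self, text):
        self.documents.append(text)

    def search(self, query, min_overlap=2):
        """Return the document sharing the most words with the query,
        or None if no document shares at least min_overlap words."""
        terms = words(query)
        best, best_overlap = None, min_overlap - 1
        for doc in self.documents:
            overlap = len(terms & words(doc))
            if overlap > best_overlap:
                best, best_overlap = doc, overlap
        return best


kb = KnowledgeBase()
kb.add("Laptop repairs are handled by the IT service desk.")

question = "What is the new VPN address?"
before = kb.search(question)

# New knowledge arrives: it is indexed, and no model is retrained.
kb.add("The new VPN address is vpn.example.org.")
after = kb.search(question)

print(before)
print(after)
```

The same question goes unanswered before the new document is indexed and is matched afterwards, which is the behavior the paragraph above describes.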

Fine-tuning versus RAG: Wikit's comparative study

To enable its customers to create the most relevant and effective chatbots possible, Wikit's R&D team and the Hubert Curien laboratory (CNRS) carried out a comparative study of fine-tuning and RAG as techniques for injecting knowledge into LLMs, using the open-source Mistral 7B model.

To evaluate the responses generated by either technique, the R&D team used two metrics that can be applied to both fine-tuning and RAG, and which reflect the quality of LLM responses when applied to conversational agents. The first metric measured was relevance, to gauge the extent to which the response generated by the LLM was relevant to the question asked. The second metric measured was fidelity, which represents the factual veracity of the answer generated by the LLM. This metric can easily be linked to the detection of hallucinations.
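The article does not detail how the study computed these two metrics, so the following is only a crude lexical sketch of the ideas behind them, not the study's method. It assumes relevance can be approximated by how many of the question's content words the answer addresses, and fidelity by how many of the answer's content words are supported by a source text. The stopword list, function names and sample texts are all hypothetical.

```python
import re

# Small, hypothetical stopword list used only for this illustration.
STOPWORDS = {"the", "a", "an", "is", "are", "to", "of", "on", "in",
             "and", "do", "i", "my", "how"}


def content_words(text):
    """Return the lowercase tokens of the text, minus stopwords."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    return {w for w in tokens if w not in STOPWORDS}


def relevance_proxy(question, answer):
    """Share of the question's content words that the answer addresses."""
    q = content_words(question)
    return len(q & content_words(answer)) / len(q) if q else 0.0


def fidelity_proxy(answer, source):
    """Share of the answer's content words that are supported by the source."""
    a = content_words(answer)
    return len(a & content_words(source)) / len(a) if a else 0.0


question = "How do I reset my password?"
source = "To reset a password, click Forgot password on the login page."
grounded = "Click Forgot password on the login page to reset the password."
hallucinated = "Call the hotline and reset the password by fax."

for answer in (grounded, hallucinated):
    print(round(relevance_proxy(question, answer), 2),
          round(fidelity_proxy(answer, source), 2))
```

In this toy example both answers are on topic, but only the grounded one is fully supported by the source, which is why a fidelity-style metric lends itself to detecting hallucinations. Real evaluations generally use stronger measures, such as human or model-based judgments.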

The results of this comparative study highlighted the superiority of the RAG technique over fine-tuning. Indeed, whatever the dataset, RAG achieved the best results in terms of both relevance and fidelity.

Conclusion

In conclusion, although fine-tuning is an effective method in some cases, RAG stands out as the best option for improving the relevance of responses generated by generative AI chatbots. It offers a more flexible and scalable approach, enabling chatbots to adapt to a wide variety of contexts and queries. This method offers dynamic adaptation to user queries by drawing on a vast range of information available in real time, enabling chatbots to produce contextual, accurate and reliable responses. Using the RAG technique in the creation of generative AI chatbots ensures a better user experience and smoother interactions.
